Deterministic ground truth from video.

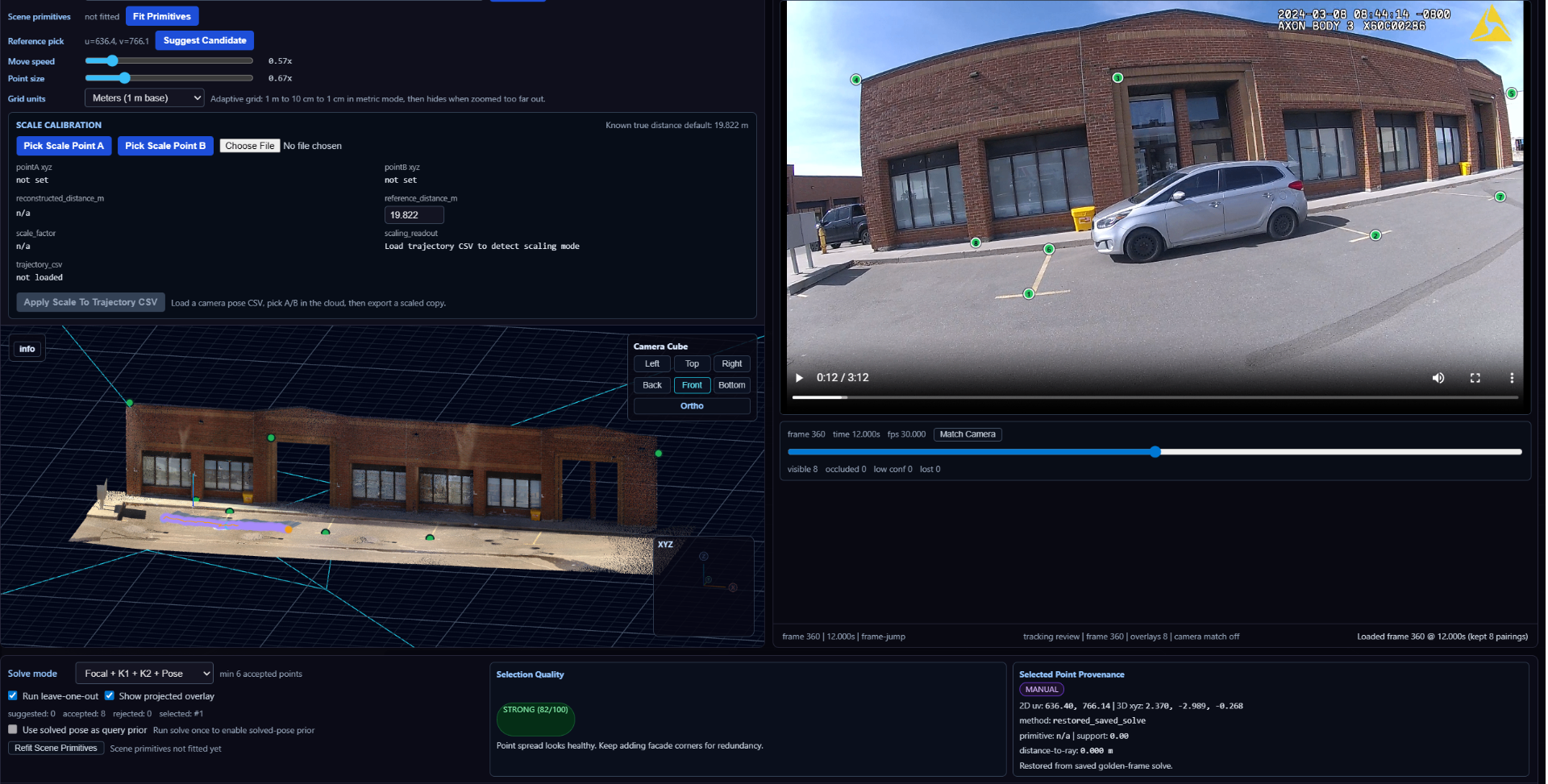

Tattva3D converts monocular video and known scene constraints into auditable camera poses, trajectories, and speed estimates for forensic analysis and liability determination.

Working components of the current analysis pipeline.

Lens Validation

Intrinsics and distortion are solved from measured 2D ↔ 3D correspondences and checked by reprojection residuals on held-out points before any downstream estimate is used.
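A held-out reprojection check of this kind can be sketched as follows. This is only an illustration, not Tattva3D's implementation: it assumes a pinhole model with a single radial distortion term `k1` and points already expressed in the camera frame. The function names, the sample values, and the `MAX_RMS_PX` threshold are hypothetical.

```python
import math

def project(point_cam, fx, fy, cx, cy, k1=0.0):
    """Project a 3D point in the camera frame to pixel coordinates.

    Assumed model: pinhole plus one radial distortion coefficient k1.
    """
    X, Y, Z = point_cam
    if Z <= 0:
        raise ValueError("point must be in front of the camera")
    x, y = X / Z, Y / Z                  # normalized image coordinates
    r2 = x * x + y * y
    d = 1.0 + k1 * r2                    # radial distortion factor
    return (fx * x * d + cx, fy * y * d + cy)

def rms_reprojection_error(points_cam, observed_px, intrinsics):
    """RMS pixel distance between projected and observed points."""
    if len(points_cam) != len(observed_px) or not points_cam:
        raise ValueError("need equal, non-empty point lists")
    total = 0.0
    for p, (u_obs, v_obs) in zip(points_cam, observed_px):
        u, v = project(p, **intrinsics)
        total += (u - u_obs) ** 2 + (v - v_obs) ** 2
    return math.sqrt(total / len(points_cam))

# Hypothetical calibration and held-out correspondences (illustrative values).
intrinsics = {"fx": 1000.0, "fy": 1000.0, "cx": 640.0, "cy": 360.0, "k1": -0.1}
held_out_3d = [(0.5, 0.2, 5.0), (-1.0, 0.4, 8.0), (0.0, -0.3, 4.0)]
# Simulated observations: exact projections shifted by half a pixel in u.
held_out_px = [(u + 0.5, v)
               for u, v in (project(p, **intrinsics) for p in held_out_3d)]

rms = rms_reprojection_error(held_out_3d, held_out_px, intrinsics)
MAX_RMS_PX = 1.0   # assumed acceptance threshold, not a Tattva3D setting
calibration_ok = rms <= MAX_RMS_PX
```

The residual is computed only on points that were not used to solve the intrinsics, so a low value is evidence about the lens model and not about the fit itself.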

Camera Trajectory Recovery

Per-frame pose is recovered from tracked image points and known scene geometry. Each frame's solution is independent and inspectable on its own merits.
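To show how independent per-frame poses become a trajectory, here is a minimal sketch. It assumes each frame's pose is given as a rotation matrix `R` and translation `t` under the convention `x_cam = R @ x_scene + t`. The pose solve itself is not shown, and the names and sample poses are hypothetical.

```python
def camera_center(R, t):
    """Camera position in scene coordinates, given x_cam = R @ x_scene + t.

    The camera center C satisfies 0 = R @ C + t, so C = -R^T @ t
    (R is a rotation, so its inverse is its transpose).
    """
    return tuple(-sum(R[r][c] * t[r] for r in range(3)) for c in range(3))

# Hypothetical per-frame poses; each entry is solved and stored on its own.
frame_poses = {
    0: ([[1, 0, 0], [0, 1, 0], [0, 0, 1]], (0.0, 0.0, -2.0)),
    1: ([[0, -1, 0], [1, 0, 0], [0, 0, 1]], (1.0, 0.0, 0.0)),
}

# No frame depends on its neighbours: the trajectory is just the collection
# of per-frame camera centers, so any single frame can be rechecked alone.
trajectory = {frame: camera_center(R, t) for frame, (R, t) in frame_poses.items()}
```

Because nothing is smoothed across frames in this sketch, a bad frame shows up as an outlier in `trajectory` and is not silently averaged away.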

Constrained Motion Analysis

Velocities and trajectories are derived in scene-aligned units from constrained motion, with explicit uncertainty — never a single unqualified number.
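One way to report a speed together with its uncertainty is first-order error propagation. The sketch below assumes independent, roughly Gaussian errors on a measured distance and a measured time interval. The function name and the sample numbers are illustrative, not part of Tattva3D.

```python
import math

def speed_with_uncertainty(distance_m, sigma_distance_m,
                           duration_s, sigma_duration_s):
    """Return (speed, one-sigma uncertainty) in m/s for v = d / t.

    First-order propagation with independent errors:
        sigma_v^2 = (sigma_d / t)^2 + (d * sigma_t / t^2)^2
    """
    if duration_s <= 0:
        raise ValueError("duration must be positive")
    speed = distance_m / duration_s
    sigma = math.hypot(sigma_distance_m / duration_s,
                       distance_m * sigma_duration_s / duration_s ** 2)
    return speed, sigma

# Illustrative inputs: 20 m (+/- 0.2 m) covered in 1.0 s (+/- 0.01 s).
speed, sigma = speed_with_uncertainty(20.0, 0.2, 1.0, 0.01)
report = f"{speed:.1f} m/s +/- {sigma:.2f} m/s (1 sigma)"
```

The function returns the pair and never the speed alone, so a caller cannot pass the estimate along without its uncertainty.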

Reviewable Runs

Inputs, parameters, and intermediates are logged so a run can be reproduced and challenged step by step from the audit manifest.
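A manifest like this can be illustrated with content hashes. The layout below is a hypothetical sketch, not Tattva3D's manifest format: it hashes each input, records the parameters, and then hashes a canonical JSON encoding of both, so any change to an input or setting changes the final digest.

```python
import hashlib
import json

def sha256_hex(data: bytes) -> str:
    """Hex SHA-256 digest of a byte string."""
    return hashlib.sha256(data).hexdigest()

def build_manifest(inputs: dict, parameters: dict) -> dict:
    """Build a manifest from named input blobs and run parameters.

    The field names here are assumptions made for this example.
    """
    entries = {
        "inputs": {name: sha256_hex(blob) for name, blob in sorted(inputs.items())},
        "parameters": parameters,
    }
    # Canonical encoding: sorted keys and fixed separators, so the same
    # content always produces the same bytes and therefore the same digest.
    canonical = json.dumps(entries, sort_keys=True, separators=(",", ":"))
    return {**entries, "manifest_sha256": sha256_hex(canonical.encode("utf-8"))}

# Illustrative run: stand-in bytes take the place of real video and survey files.
inputs = {"clip.mp4": b"video bytes", "survey.csv": b"x,y,z\n0,0,0\n"}
params = {"fx": 1000.0, "k1": -0.1, "max_rms_px": 1.0}

manifest = build_manifest(inputs, params)
rerun = build_manifest(inputs, params)
altered = build_manifest(inputs, {**params, "k1": -0.2})
```

A reviewer who reruns with the same inputs and parameters should get the same `manifest_sha256`, while a changed parameter, as in `altered`, gives a different one.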

Scene-constrained human motion from monocular video.

An early prototype recovers an individual's motion from a single video and re-expresses it inside the same constrained scene geometry used for camera and vehicle estimates. The human figure is anchored to measured ground and the recovered camera, not free-floating.

Note the vehicles in the background: they jitter frame-to-frame because, in this clip, they are tracked without a metric anchor of their own. That instability is the exact failure mode the next prototype addresses — by constraining each vehicle to scene geometry and known dimensions, rather than letting it drift.

Exploratory R&D, not a shipped capability. Shown to illustrate the direction: extending the same scene-constrained, inspectable approach from vehicles to people.

Semantic vehicle masking for constrained speed estimation.

The direct response to the background jitter seen in the previous clip. Here, the vehicle is segmented per frame and locked into the same scene-aligned 3D environment, with the vehicle's known physical dimensions acting as a metric anchor. The bounding box stays dimensionally consistent across frames instead of drifting.

That dimensional anchoring is what turns per-frame masks into a defensible speed reading: motion is derived from constrained, measurable inputs rather than an unconstrained track.
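The role of a known dimension as a metric anchor can be illustrated with a simplified sketch. A monocular track is only defined up to scale, so one known length fixes the conversion from reconstruction units to metres. The function names and numbers below are hypothetical, and the result is an illustration, not a validated speed measurement.

```python
import math

def metric_scale(known_length_m, reconstructed_length_units):
    """Metres per reconstruction unit, from one known physical dimension."""
    if known_length_m <= 0 or reconstructed_length_units <= 0:
        raise ValueError("lengths must be positive")
    return known_length_m / reconstructed_length_units

def metric_displacement(p0, p1, scale):
    """Distance in metres between two positions given in reconstruction units."""
    return scale * math.dist(p0, p1)

# Illustrative values: a vehicle known to be 4.5 m long spans 1.5 units
# in the unscaled reconstruction.
scale = metric_scale(4.5, 1.5)

# Vehicle reference point in two frames (reconstruction units), 0.5 s apart.
displacement_m = metric_displacement((0.0, 0.0, 0.0), (2.0, 0.0, 0.0), scale)
speed_mps = displacement_m / 0.5
```

If the reconstructed vehicle length changed from frame to frame, the scale would drift with it. This is why a dimensionally consistent box matters before any speed is derived.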

Exploratory R&D — not a validated speed measurement. Shown to make the working approach visible.

Many video-to-3D systems infer geometry the camera never observed. In a forensic context, an inferred surface is not evidence — it is an assumption presented as a measurement.

Tattva3D works the other way. Every estimate is anchored to a calibrated lens, tracked image points, and scene geometry that can be measured or surveyed. Nothing downstream is computed from unseen structure, and every intermediate remains open to inspection.

The output is not a guessed scene. It is an inspectable chain from evidence to measurement.

Forensic engineers

Recover scene-aligned measurements from video evidence with a defensible computational chain.

Accident reconstruction teams

Pair video with survey or scan data to constrain trajectories and speeds without proprietary scene reconstruction.

Insurers and liability teams

Inspect, reproduce, and challenge measurements without relying on a black-box estimate.

- Not a generative video-to-3D toy

- Not a photogrammetry replacement

- Not a black-box “AI says the speed was X” system

- Not optimized for visuals before mathematical validity

Work today centers on constrained motion recovery from monocular video. Each component below is held to the same requirement: the result must be reproducible from a logged manifest by an outside reviewer.

- F.01 Lens calibration

- F.02 Tracked points

- F.03 Per-frame camera pose recovery

- F.04 Constrained motion analysis

- F.05 Speed estimation

- F.06 Auditability and rerunability